GoodData Platform Overview

The GoodData platform is a cloud-based, end-to-end analytics tool. It captures and loads data from numerous and diverse data sources and then enables users to build metrics, reports, and dashboards.

Thanks to the GoodData platform’s modular nature and scalability, you can build your own data pipeline, design your own logical data model, and deliver customized and reusable analytics.

With the GoodData platform, you have access to powerful SDK tools and a rich REST API library (see API Reference).

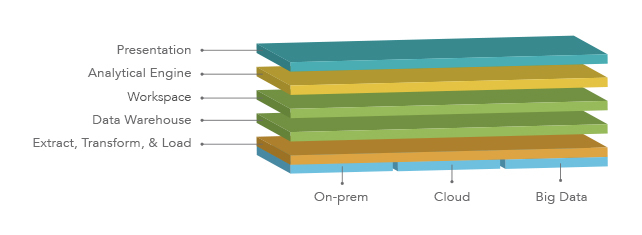

GoodData Platform Layers

The GoodData platform comprises the following layers:

Presentation: Called the GoodData Portal, the presentation layer of the GoodData platform is an intuitive web interface for building and reviewing metrics, dashboards, and insights. Authenticated users can access the GoodData Portal from any modern web browser with JavaScript enabled. The presentation layer accesses the platform via APIs. All platform functionality is available through these APIs, which enables seamless integration with other enterprise systems. For more information, see GoodData Portal.

Analytical Engine: Queries submitted from the GoodData Portal pass through the analytical engine, which breaks them down into smaller sets of queries for performance optimization and caching. These smaller queries are then submitted to the workspace for processing.

Workspace: In GoodData, a workspace contains all the loaded data and metadata (metrics, insights, and dashboards) for a single subject area.

Data Warehouse: The underlying data warehouse contains all of your domain’s data and is used for feeding workspaces. For more information, see Data Warehouse.

Extract, Transform & Load (ETL): ETL processes are used to acquire data from source systems, consolidate the data, and load it into datasets within the GoodData platform. For more information, see Data Preparation and Distribution.
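The Analytical Engine layer above is described as splitting a query into smaller queries so that results can be cached. The following is a minimal Python sketch of that general idea, not GoodData's implementation: the `Engine` class, the `sum_by` backend, and the in-memory `ROWS` are all hypothetical names invented for illustration, and the real engine's decomposition strategy is not described here.

```python
# Hypothetical in-memory data standing in for a workspace.
ROWS = [
    {"region": "EU", "revenue": 10, "cost": 4},
    {"region": "US", "revenue": 20, "cost": 5},
    {"region": "EU", "revenue": 5, "cost": 1},
]


def sum_by(metric, attribute):
    """Hypothetical backend: total one metric per value of one attribute."""
    totals = {}
    for row in ROWS:
        totals[row[attribute]] = totals.get(row[attribute], 0) + row[metric]
    return totals


class Engine:
    """Splits a multi-metric query into per-metric sub-queries and caches each."""

    def __init__(self, backend):
        self.backend = backend
        self.cache = {}
        self.backend_calls = 0  # counts sub-queries that missed the cache

    def run_subquery(self, metric, attribute):
        key = (metric, attribute)
        if key not in self.cache:
            self.backend_calls += 1
            self.cache[key] = self.backend(metric, attribute)
        return self.cache[key]

    def run(self, metrics, attribute):
        # One sub-query per metric, so overlapping reports share cached results.
        return {metric: self.run_subquery(metric, attribute) for metric in metrics}


engine = Engine(sum_by)
first = engine.run(["revenue", "cost"], "region")
second = engine.run(["revenue"], "region")  # answered entirely from the cache
print(first, engine.backend_calls)
```

In this sketch, the second report reuses the cached `revenue` sub-query, so the backend is called only twice in total.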

Platform Architecture

GoodData is a multi-tenant platform built from hundreds of asynchronous services that are integrated to ensure efficient distribution, failover, and security.

Data Storage

Depending on customer requirements, the GoodData platform can integrate with a variety of databases for secure storage and access to data.

All customer data is stored in a data warehouse called a domain (formerly known as an “organization”). Within a domain, there are individual workspaces and their data, users, and ETL processes (see Your GoodData Domain). For more information on terminology, see GoodData Glossary.

Data Modeling

In the platform, data models are segmented into two components: the logical data model and the physical data model.

A logical data model describes the attributes and facts contained in each dataset, as well as the relationships between these objects. When the logical data model is deployed to a workspace in the platform, it is used to create or update the physical data model, which describes the tables in the data warehouse used to store loaded data.

Incoming ETL data is written to the physical data model by way of the logical data model. Similarly, queries for workspace data from the GoodData Portal are resolved through the logical data model.
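To make the relationship between the two models concrete, here is a minimal Python sketch, assuming a simplified mapping in which each logical dataset becomes one physical table. The `Dataset` class, the `to_physical_ddl` function, and the column types are hypothetical and chosen for illustration only; they do not reflect the actual table layout that the platform generates.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    """One dataset in a hypothetical logical data model."""
    name: str
    attributes: list = field(default_factory=list)  # descriptive values
    facts: list = field(default_factory=list)       # numeric measures
    references: list = field(default_factory=list)  # names of related datasets


def to_physical_ddl(dataset):
    """Derive an illustrative CREATE TABLE statement from a logical dataset."""
    columns = [f"{dataset.name}_id INTEGER PRIMARY KEY"]
    columns += [f"{attr} VARCHAR(255)" for attr in dataset.attributes]
    columns += [f"{fact} DECIMAL(15, 2)" for fact in dataset.facts]
    # A relationship to another dataset becomes a foreign-key style column.
    columns += [f"{ref}_id INTEGER" for ref in dataset.references]
    body = ",\n  ".join(columns)
    return f"CREATE TABLE {dataset.name} (\n  {body}\n);"


orders = Dataset(
    name="orders",
    attributes=["status"],
    facts=["amount"],
    references=["customer"],
)
print(to_physical_ddl(orders))
```

The point of the sketch is the direction of the dependency: the logical description is the source of truth, and the physical tables are derived from it.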

Logical data models are created in the LDM Modeler (see Data Modeling in GoodData).

Data Loading

Data can be loaded into a workspace from virtually any well-organized data source via ETL processes. These processes extract source data from a variety of formats (including flat files, databases, and JSON via API), transform it as needed to clean, combine, and streamline the inputs, and load it into the designated workspace. For more information, see Data Preparation and Distribution.
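The three ETL stages can be sketched in a few lines of Python. This is a generic illustration using only the standard library, not the GoodData ETL tooling: the `SOURCE_CSV` sample, the `extract`, `transform`, and `load` functions, and the dictionary that stands in for a workspace are all hypothetical.

```python
import csv
import io

# Hypothetical flat-file source with untidy values.
SOURCE_CSV = """order_id,customer,amount
1, Alice ,10.50
2,Bob,
3,alice,4.25
"""


def extract(text):
    """Extract: parse the flat file into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: drop rows without an amount, normalize names, cast types."""
    cleaned = []
    for row in rows:
        if not row["amount"].strip():
            continue  # skip incomplete rows
        cleaned.append({
            "order_id": int(row["order_id"]),
            "customer": row["customer"].strip().title(),
            "amount": float(row["amount"]),
        })
    return cleaned


def load(rows, workspace):
    """Load: append rows to the 'orders' dataset of a stand-in workspace."""
    workspace.setdefault("orders", []).extend(rows)
    return len(rows)


workspace = {}
loaded = load(transform(extract(SOURCE_CSV)), workspace)
print(loaded, workspace["orders"])
```

Keeping each stage as a separate function mirrors how the stages are described: each one can be tested or replaced without touching the others.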

You can monitor deployed ETL processes through the Data Integration Console. See Data Integration Console Reference.

Data Access

All customer data is secured and localized to the domain belonging to the customer. A user cannot access any data stored in the platform that exists outside the domain in which the user has been created.
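The domain boundary described above amounts to a simple rule: a user sees only what lives in the user's own domain. The following Python sketch shows that rule under stated assumptions; the `User` and `Workspace` classes and the `visible_workspaces` function are hypothetical and say nothing about how the platform actually enforces isolation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    login: str
    domain: str  # the domain in which the user was created


@dataclass(frozen=True)
class Workspace:
    title: str
    domain: str  # the domain that owns the workspace


def can_access(user, workspace):
    """A user may reach a workspace only inside the user's own domain."""
    return user.domain == workspace.domain


def visible_workspaces(user, workspaces):
    """Filter a list of workspaces down to those the user may reach."""
    return [ws for ws in workspaces if can_access(user, ws)]


all_workspaces = [
    Workspace("Sales", "acme"),
    Workspace("Support", "acme"),
    Workspace("Finance", "globex"),
]
alice = User("alice@example.com", "acme")
print([ws.title for ws in visible_workspaces(alice, all_workspaces)])
```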

Most users interact with the datastore through the GoodData Portal, a web application that enables users to view or modify the workspaces that have been created for them. See GoodData Portal.

Access to the GoodData Portal features is governed by the role assigned to each user. For details, see User Roles.

Queries for data from the datastore are passed through the Extensible Analytics Engine for high-performance and scalable retrieval of workspace assets. These queries originate either from a request made in the Portal or from a user entry in MAQL, a proprietary query language. See MAQL - Analytical Query Language.

Security

For information about GoodData platform security, see Platform Security and Compliance.