Create the Output Stage based on Your Logical Data Model

For workspace administrators only

Creating the Output Stage as described in the following procedure (that is, directly from the LDM Modeler) is available only for cloud data warehouses (for example, Snowflake or Redshift). If you are using the GoodData Agile Data Warehousing Service (ADS), you need to use the API to create the Output Stage.

If you upload data from a data warehouse (for example, Snowflake or Redshift) and use, or plan to use, the Output Stage as a source for loading data to your workspaces (see Automated Data Distribution v2 for Data Warehouses), you can generate the Output Stage based on the logical data model (LDM). Whenever you update the LDM, recreate the Output Stage so that it matches the LDM.

The GoodData platform scans the LDM and suggests SQL queries that you then execute on your schema to create the Output Stage. The Output Stage follows the naming convention described in Naming Convention for Output Stage Objects in Automated Data Distribution v2 for Data Warehouses.
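To make the idea of deriving SQL from the LDM more concrete, the following is a minimal Python sketch and not the platform's actual generator. The `Dataset` class, the `create_table_sql` function, the `out_` table prefix, the column prefixes, and the SQL data types are all assumptions chosen for illustration. Refer to the naming convention article for the authoritative rules, and to the queries that the LDM Modeler suggests for the real output.

```python
from dataclasses import dataclass

# Assumed column prefixes per field kind; verify them against the
# naming convention article before relying on them.
PREFIXES = {
    "key": "cp__",
    "attribute": "a__",
    "fact": "f__",
    "reference": "r__",
    "date": "d__",
}

# Placeholder SQL types, picked only for this illustration.
SQL_TYPES = {
    "key": "VARCHAR(128)",
    "attribute": "VARCHAR(128)",
    "fact": "NUMERIC(12,2)",
    "reference": "VARCHAR(128)",
    "date": "DATE",
}


@dataclass
class Dataset:
    """Hypothetical, simplified stand-in for one LDM dataset."""
    name: str
    fields: list  # list of (field_name, field_kind) tuples


def create_table_sql(dataset, table_prefix="out_"):
    """Build a CREATE TABLE statement for one dataset."""
    columns = [
        f"{PREFIXES[kind]}{name} {SQL_TYPES[kind]}"
        for name, kind in dataset.fields
    ]
    body = ",\n  ".join(columns)
    return f"CREATE TABLE {table_prefix}{dataset.name} (\n  {body}\n);"


orders = Dataset(
    "orders",
    [
        ("order_id", "key"),
        ("status", "attribute"),
        ("amount", "fact"),
        ("customer", "reference"),
        ("order_date", "date"),
    ],
)
print(create_table_sql(orders))
```

The sketch maps each dataset to one table and each field to one prefixed column, which is why the Output Stage has to be recreated whenever the LDM changes.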

Before creating the Output Stage, make sure that your LDM is ready and there are no unpublished changes.

You can also create the Output Stage based on your data warehouse schema (see Create the Output Stage based on Your Data Warehouse Schema).

Steps:

On the top navigation bar, select Data. The LDM Modeler opens in view mode.

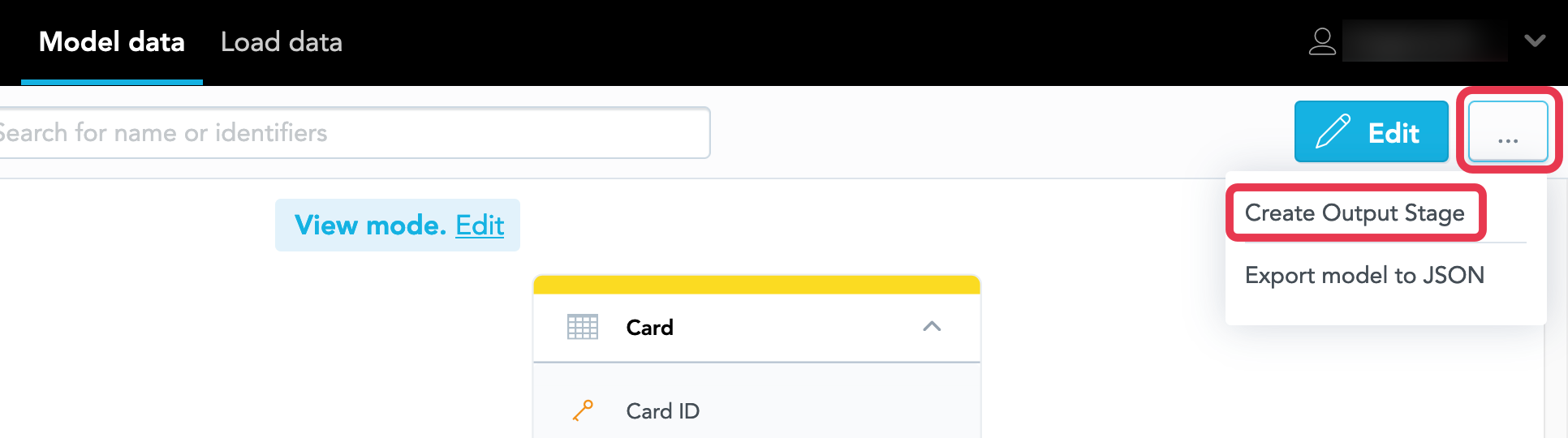

Click the menu button on the top right, and click Create Output Stage.

Select the Data Source that you want to create the Output Stage for, and select the SQL command that you want to generate. You can choose to create tables, alter tables, or create views.

You can select only from the Data Sources that have the Output Stage prefix specified (see Create a Data Source).

Click Create. The generation process starts. When the process completes, the dialog shows the SQL queries that you can use to create the Output Stage.

Review the suggested queries and modify them as needed. Once done, execute the queries on your schema to create the Output Stage. The Output Stage is created.
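The final step, executing the reviewed queries and confirming that the objects exist, can be sketched as follows. This is only an illustration: it uses Python's built-in `sqlite3` module with an in-memory database as a stand-in for your data warehouse, and the statements, the table name, and the view name are hypothetical. With Snowflake or Redshift you would use that warehouse's own client and catalog queries instead.

```python
import sqlite3

# Hypothetical statements, standing in for the queries copied from the
# dialog after you have reviewed and adjusted them.
reviewed_statements = [
    "CREATE TABLE out_orders (cp__order_id VARCHAR(128), f__amount NUMERIC(12,2))",
    "CREATE VIEW out_orders_view AS SELECT cp__order_id, f__amount FROM out_orders",
]


def apply_statements(conn, statements):
    """Execute each statement in the given order."""
    cursor = conn.cursor()
    for statement in statements:
        cursor.execute(statement)
    conn.commit()


def existing_objects(conn):
    """Return the names of all tables and views (SQLite-specific catalog query)."""
    cursor = conn.cursor()
    cursor.execute("SELECT name FROM sqlite_master WHERE type IN ('table', 'view')")
    return {row[0] for row in cursor.fetchall()}


conn = sqlite3.connect(":memory:")
apply_statements(conn, reviewed_statements)
print(sorted(existing_objects(conn)))
```

Listing the created objects afterwards is a quick way to confirm that every table or view you expected is present before you set up data loading.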

If you do not have data load processes set up yet, you can now deploy processes for loading data (see Deploy a Data Loading Process for Automated Data Distribution v2) and schedule them to load data regularly (see Schedule a Data Load).

You can also generate the Output Stage from an LDM via the API.