Loading Data to Data Warehouse Staging Tables through CloudConnect

CloudConnect is a legacy tool and will be discontinued. We recommend that to prepare your data you use the GoodData data pipeline as described in Data Preparation and Distribution. For data modeling, see Data Modeling in GoodData to learn how to work with Logical Data Modeler.

Using a separate DbExecute component, you can use the COPY LOCAL command to populate your staging tables with data from a locally referenced file.

- For more information on the COPY LOCAL command, see Use COPY FROM LOCAL to Load Data.

- For more information on creating the staging tables, see Creating Tables in Data Warehouse from CloudConnect.

In the following example, you create a DbExecute instance to load the staging table for in_opportunities from the local file opportunities.csv.

Steps:

In the graph, add a DBExecute component.

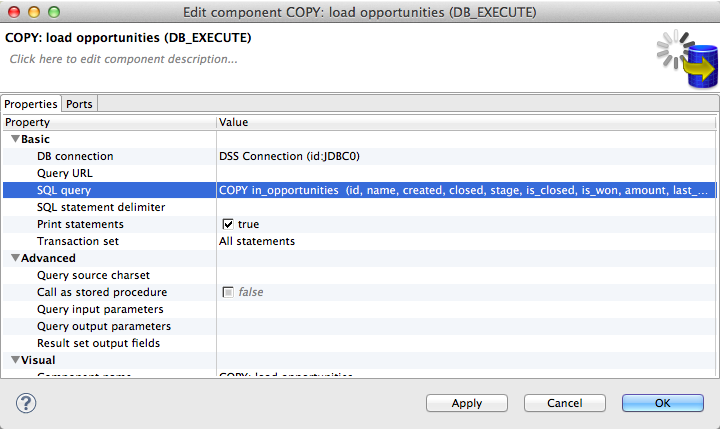

Edit the component in the following way:

DB Connection: select the connection that you created

SQL query: Specify at least two commands in the following order, separated by a semicolon:

- The TRUNCATE command to ensure that the staging table is empty before copying into the table

- The COPY LOCAL commands to copy from the local source file (opportunities.csv in this case) to the staging table

TRUNCATE in_opportunities;COPY in_opportunities (id, name, created, closed, stage, is_closed, is_won, amount, last_modified) FROM LOCAL '${DATA_SOURCE_DIR}/opportunities.csv' SKIP 1 ABORT ON ERRORPrint statements: true

Transaction set: All statements

Your DBExecute component looks like the following:

Save your graph.